On 30th June, 2020 IBM officially launched the latest DB2 release, DB2 Nebula 11.5.4. The Triton midrange team has been exploring some of the exciting features that the new DB2 release has to offer. In this series we take a look at our DB2 11.5.4 feature highlights and include our initial first impressions.

In the third in our series Mark Gillis looks at 11.5.4 Nebula BLU Enhancements.

11.5.4 Nebula BLU Enhancements

Column-organized data has been around for a while now; since v10.5, but I think it’s fair to say that it’s first iteration had a number of glitches. Part of it’s selling point is that it provides extremely aggressive data compression (actually encoding) and that that compression persists in memory, i.e. the query doesn’t need to unpack the data and write it into bufferpools in un-compressed format, thereby flushing out a lot of other data that you might still need. This is accomplished by building a compression dictionary at LOAD time, but it only works for some data types; notably not string types like VARCHAR. As these can be where a large percentage of the data is stored, this can mean that compression is not working optimally. One of the 11.5.4 Nebula BLU enhancements that has recently been introduced addresses this. Published details are here: V11.5 Column-organized table variable but there are glitches and gotchas to consider.

String / Varchar compression

Data stored as Char or VarChar, Graphic or VarGraphic and Binary or VarBinary can now be compressed at the page level. Previously these data types were not targeted by adaptive compression which could leave quite large volumes of data uncompressed, in a column-organized table.

It is used during bulk insert processing to reduce storage but is non-dictionary based and will decompress data during query processing. This will have a performance impact as any query accessing the data will have to decompress it, as it comes off disk.

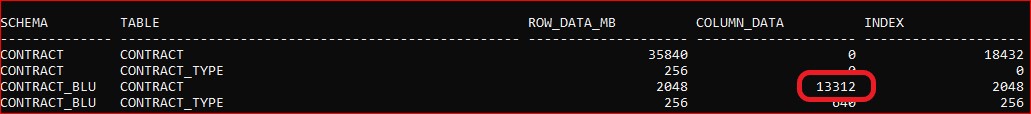

As an example, I have a column-organized table here called CONTRACT in a schema called CONTRACT_BLU. After initial LOAD (and with no VARCHAR data types in the table) the column data is 13,312 KB

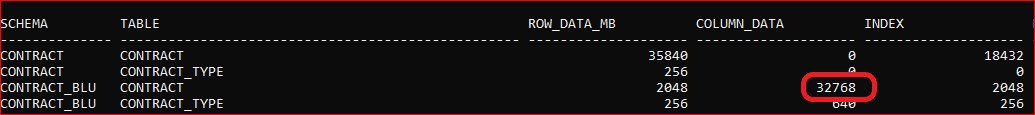

I alter the table to add a column with a definition of VARCHAR(1024) and populate it with data, whilst the registry variable is still set to DB2_COL_STRING_COMPRESSION=UNENCODED_STRING:NO

This results in the footprint of the data increasing by 19,456 KB:

If that operation is repeated; adding another VARCHAR(1024) column and populating it, with the registry variable set to DB2_COL_STRING_COMPRESSION=UNENCODED_STRING:YES (remembering again to restart the instance so that the setting takes effect), the footprint increases by 29,696 KB! That’s not what we wanted; the result with the compression switched on is worse than with it switched off!

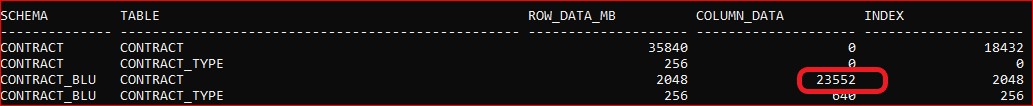

The catch is that “Existing tables will need to be unloaded and reinserted to take advantage of this feature”. This can be demonstrated by unloading the data, truncating the table and then doing a LOAD … INSERT. The data footprint now shows an increase of just 10,240 KB for both the new VARCHAR columns (i.e. 23,552 minus the original 13,312 KB):

Enabling DB2_COL_STRING_COMPRESSION is a fallback-incompatible feature. Setting this variable to “UNENCODED_STRING:YES” will cause tables in the database to be incompatible with any releases prior to Version 11.5 Mod Pack 4 because this feature changes the on-disk table structure. Falling back to any prior release after setting this variable to “UNENCODED_STRING:YES” may trigger a SQL1784N error when accessing column-organized tables.

A good feature but one that needs careful handing to realize the benefits.

Please click here for Part 2 of 11.5.4 Nebula BLU Enhancements or have a browse around our Technical Blogs for more info on V11.5.4 Nebula